Building an AI-powered predicted exam paper feature

Internal hackathons are a strange beast. You're building alongside the same people you ship with every day, but the rules are different. No sprint planning. No PRDs. No "let's align on scope." Just a weekend, a loose idea, and permission to move fast without asking anyone.

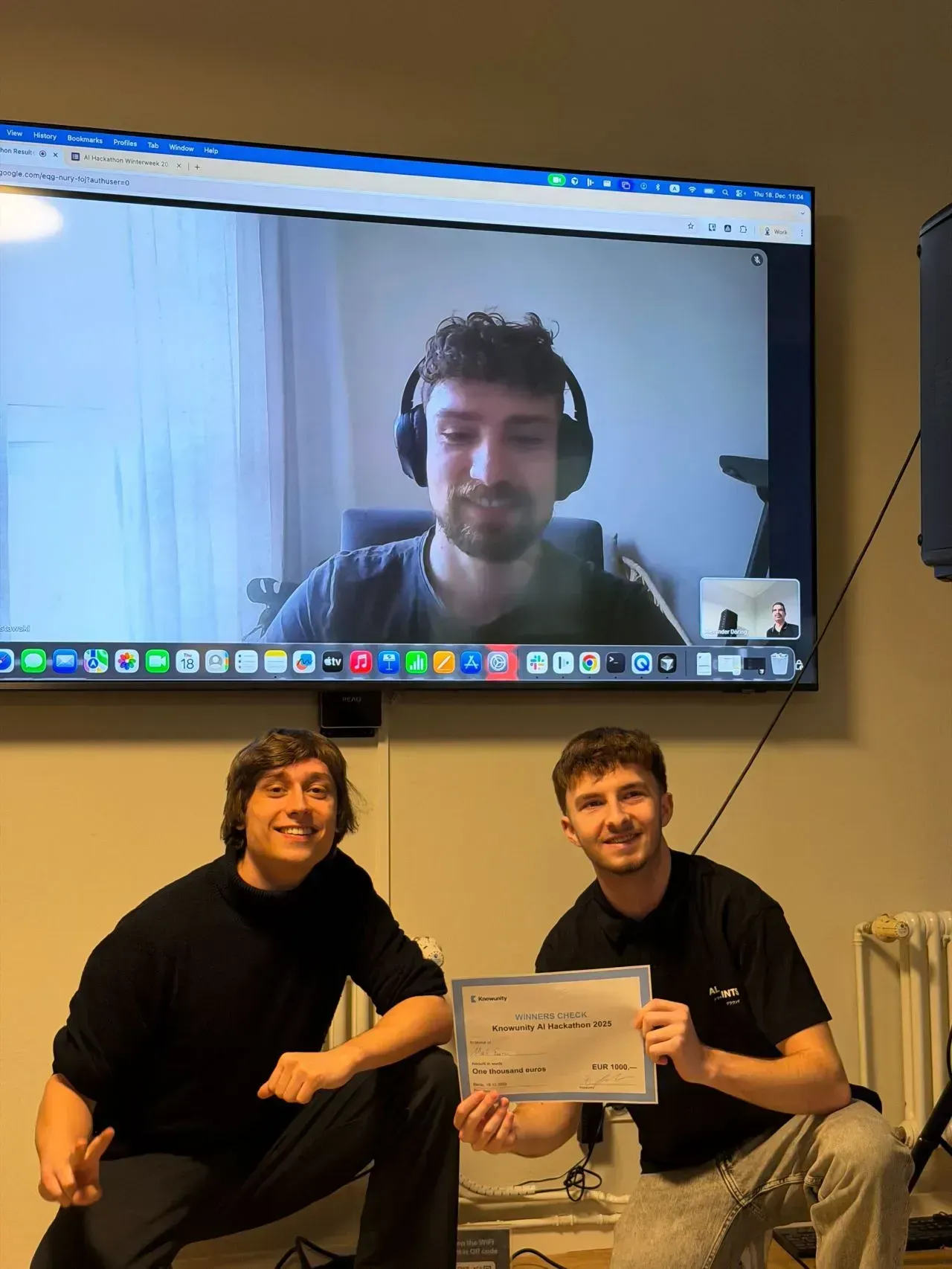

At Knowunity's internal winter hackathon, our team built something that's since become a real feature: AI-powered predicted papers for students in the markets where we've launched.

What predicted papers actually are

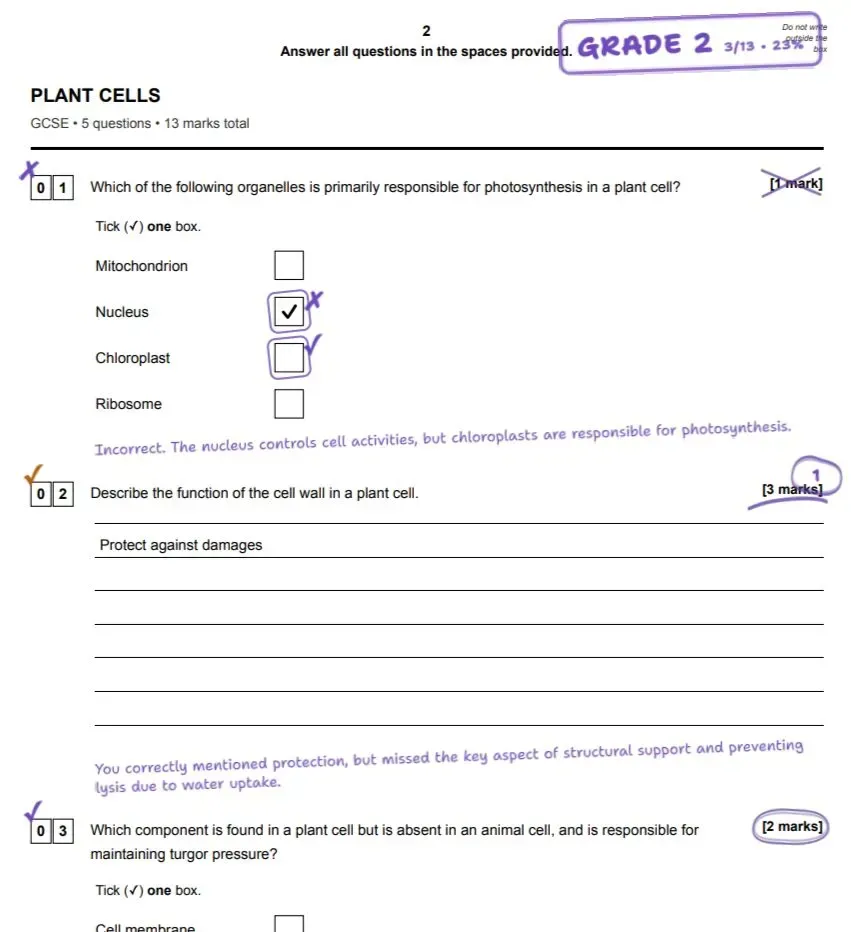

The concept is straightforward. Exam boards, like those that set GCSEs in the UK, follow patterns. Certain topics recur at predictable intervals. Certain question formats repeat. Students and teachers have always tried to spot these patterns manually, but it's time-consuming and inherently limited by how many past papers one person can realistically analyse.

We automated that. By feeding historical exam papers into an AI pipeline, we can surface recurring themes, identify likely topic areas for upcoming exams, and generate predicted papers that give students a more targeted, data-driven approach to revision.
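To make the pattern-spotting idea concrete, here's a toy sketch of its simplest possible form: counting how often each topic has appeared in past papers and how recently. This is an illustration only, not the pipeline we built. The data is invented, and `PAST_PAPERS`, `rank_topics`, and the ranking rule are hypothetical names and choices made up for this example. It also assumes each paper has already been tagged with topics, which is a step a real system would need to handle first.

```python
from collections import defaultdict

# Hypothetical, hand-made data: the topics each past paper covered.
# A real system would have to extract these labels from the papers themselves.
PAST_PAPERS = {
    2019: ["algebra", "probability", "trigonometry"],
    2020: ["algebra", "geometry"],
    2021: ["algebra", "probability", "vectors"],
    2022: ["geometry", "trigonometry"],
    2023: ["algebra", "probability"],
}


def rank_topics(papers, upcoming_year):
    """Rank topics by how often they appeared, breaking ties by recency."""
    # Collect the years in which each topic showed up.
    years_seen = defaultdict(list)
    for year, topics in papers.items():
        for topic in set(topics):  # count a topic once per paper
            years_seen[topic].append(year)

    total_papers = len(papers)
    ranking = []
    for topic, years in years_seen.items():
        frequency = len(years) / total_papers      # share of papers with the topic
        years_since = upcoming_year - max(years)   # gap since the last appearance
        ranking.append((topic, frequency, years_since))

    # Most frequent first, then most recently seen, then alphabetical.
    ranking.sort(key=lambda row: (-row[1], row[2], row[0]))
    return ranking


if __name__ == "__main__":
    for topic, frequency, years_since in rank_topics(PAST_PAPERS, 2024):
        print(f"{topic:<13} in {frequency:.0%} of papers, last seen {years_since} year(s) ago")
```

A frequency count like this is roughly what a person does by hand with a stack of past papers. The interesting part of an automated approach is doing it across far more papers, and for question formats as well as topics.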

The output quality genuinely surprised us. The predicted papers weren't just technically competent. Placed next to real past papers, their usefulness was immediately obvious.

How AI tools changed the build process

The feature itself is interesting, but what stood out most to me was how fast we built it.

We leaned heavily on AI-assisted development tools, particularly Cursor, throughout the hackathon. If you haven't used Cursor, the short version is that it integrates AI directly into your editor in a way that meaningfully accelerates prototyping and iteration. Not in a "write my code for me" way — more in a "remove the friction between having an idea and testing it" way.

For a hackathon context, where speed is everything and polish is secondary, this was transformative. Tasks that would normally eat hours — scaffolding boilerplate, writing data parsing logic, debugging edge cases in unfamiliar APIs — got compressed significantly. We spent more time thinking about the product and less time fighting the implementation.

This is worth calling out because it reflects a broader shift in how software gets built. AI in the development workflow isn't just about making smarter end products. It's about dramatically faster execution. The gap between "we had an idea" and "we have a working prototype" is shrinking in a way that has real implications for how teams prioritise, experiment, and ship.

What happens next

The predicted papers feature has moved beyond hackathon prototype into a real part of the Knowunity product. Students across our markets can use it to make their revision more efficient, more targeted, and ultimately more effective.

For me personally, the hackathon reinforced something I keep coming back to: the best way to validate an idea is to build it. Not to write a spec. Not to run a survey. Build the thing, put it in front of users, and see what happens. The tools available now make that loop faster than ever, and I'm excited to see how that continues to evolve.